Robot Learning with a Spatial, Temporal, and Causal And-Or Graph

Caiming Xiong¹, Nishant Shukla¹, Wenlong Xiong, Song-Chun Zhu¹

¹Center for Vision, Cognition, Learning and Autonomy, UCLA

Introduction

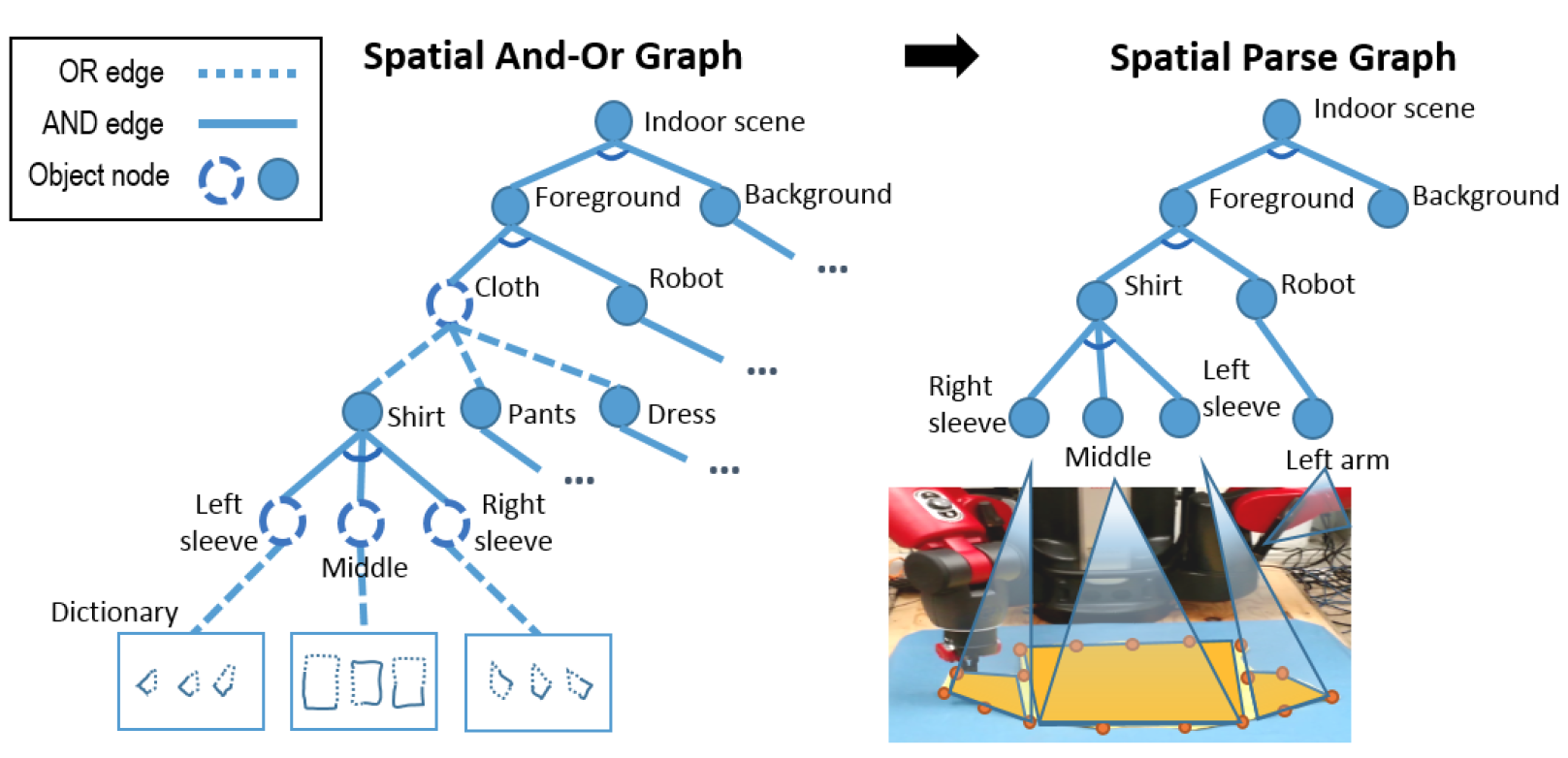

We propose a stochastic graph-based framework, built on a spatial, temporal, and causal And-Or Graph, that lets a robot understand tasks from human demonstrations and perform them with feedback control. The framework unifies knowledge representation and action planning in a single hierarchical data structure, so a robot can expand its spatial, temporal, and causal knowledge at varying levels of abstraction. The learning system can watch human demonstrations, generalize the learned concepts, and perform tasks in new environments and on different robotic platforms. We demonstrate the system by having a robot perform a cloth-folding task after watching a few human demonstrations. The robot accurately reproduces the learned skill and generalizes the task to other articles of clothing.
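To make the idea of a stochastic hierarchical structure that doubles as a plan more concrete, the following is a minimal sketch of a generic stochastic And-Or graph. It is an illustrative assumption, not the authors' implementation: it models only temporal decomposition (And-nodes run all children in order) and stochastic choice (Or-nodes pick one alternative by probability), and it omits the spatial and causal components. The `Node` class, `sample_plan` function, action names, and branch probabilities are all hypothetical.

```python
import random
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """A node in a toy stochastic And-Or graph (hypothetical structure)."""
    name: str
    kind: str  # "and", "or", or "terminal"
    children: List["Node"] = field(default_factory=list)
    probs: Optional[List[float]] = None  # branch probabilities, Or-nodes only


def sample_plan(node: Node, rng: random.Random) -> List[str]:
    """Sample one parse of the graph and return its terminal actions in order."""
    if node.kind == "terminal":
        return [node.name]
    if node.kind == "and":
        # And-node: every child is executed, in order (temporal decomposition).
        plan: List[str] = []
        for child in node.children:
            plan.extend(sample_plan(child, rng))
        return plan
    if node.kind == "or":
        # Or-node: exactly one alternative is chosen according to its probability.
        child = rng.choices(node.children, weights=node.probs, k=1)[0]
        return sample_plan(child, rng)
    raise ValueError(f"unknown node kind: {node.kind}")


def t(name: str) -> Node:
    """Shorthand for a terminal (primitive action) node."""
    return Node(name, "terminal")


# Hypothetical shirt-folding graph: the sleeve order is an Or-choice.
left_first = Node("left_first", "and", [t("fold_left_sleeve"), t("fold_right_sleeve")])
right_first = Node("right_first", "and", [t("fold_right_sleeve"), t("fold_left_sleeve")])
sleeves = Node("fold_sleeves", "or", [left_first, right_first], probs=[0.7, 0.3])
task = Node("fold_shirt", "and", [t("flatten"), sleeves, t("fold_in_half")])

if __name__ == "__main__":
    print(sample_plan(task, random.Random(0)))
```

Each call to `sample_plan` yields one concrete action sequence, so the same graph serves both as a description of the task (all valid ways to fold) and as a generator of executable plans. In a learned system, the structure and the Or-node probabilities would be estimated from demonstrations rather than written by hand as they are here.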